How vCluster Solves The Multi-Tenancy Compliance Dilemma

Published on 17 March 2026 by Jannis Schoormann

Compliance frameworks such as ISO 27001, SOC 2 and PCI-DSS provide little room for interpretation with regard to tenant isolation. In Kubernetes, this has traditionally meant having to make a difficult choice: either spin up separate clusters for every team or workload, or rely on namespace separation. vCluster offers an alternative to these two approaches. We will look at where conventional solutions fall short and how vCluster addresses these shortcomings.

The Hidden Cost of Compliance in Kubernetes

The adoption of Kubernetes in enterprises is accelerating. However, if your organization operates under strict regulatory requirements such as ISO 27001, SOC 2, PCI-DSS or HIPAA, this pace can create real challenges. How can you provide development teams with the necessary autonomy without compromising your compliance status?

For many organizations, multi-tenancy is where this tension becomes real. You need to share infrastructure efficiently, but compliance frameworks demand isolation, access control and auditability. The architecture you choose will determine whether you can deliver on both or end up sacrificing one for the other.

The Compliance Challenge in Kubernetes

The specific frameworks vary, but the underlying expectations for shared infrastructure are consistent:

- ISO 27001 requires strict access controls and environment segregation.

- SOC 2 mandates logical separation with clearly defined access boundaries.

- PCI-DSS demands network segmentation and isolation of systems touching cardholder data.

- HIPAA requires safeguards that keep sensitive health data isolated and access-controlled.

Different frameworks, same fundamental requirements: isolation, access control, and auditability. The question is what level of isolation your specific compliance context demands, and which Kubernetes architecture can deliver it.

The Established Approaches

It is important to be clear about the capabilities and limitations of existing multi-tenancy models.

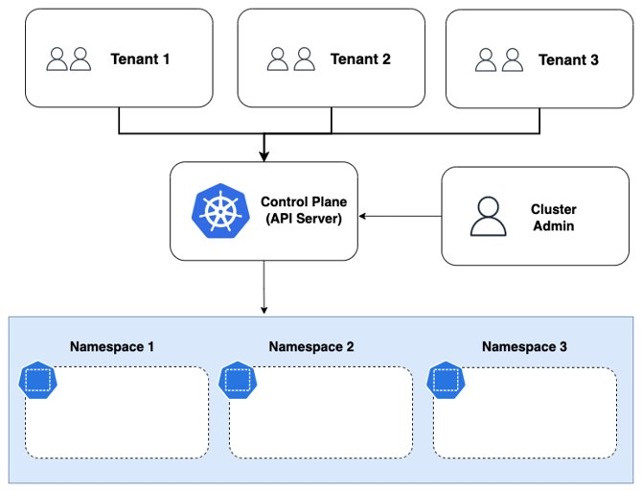

Namespace-as-a-Service (NaaS)

In a NaaS model, teams share a cluster and are given dedicated namespaces. Isolation is enforced through RBAC (Role-Based Access Control), network policies, resource quotas and policy engines such as Kyverno. This covers a significant number of multi-tenancy use cases, including many regulated environments. With the correct configuration, NaaS can provide robust access control and network segmentation. Using dedicated node pools with taints/tolerations can even provide compute-level isolation.
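As a rough illustration of the building blocks named above, the following Python sketch generates a namespace, a namespace-scoped Role with its RoleBinding, and a ResourceQuota for one tenant. The tenant name `team-a`, the group `team-a-devs`, the object names and the quota values are hypothetical; the manifests themselves use the standard core `v1` and `rbac.authorization.k8s.io/v1` object kinds.

```python
import json


def tenant_manifests(tenant: str, group: str) -> list[dict]:
    """Build namespace-scoped isolation objects for one tenant (illustrative values)."""
    rbac_group = "rbac.authorization.k8s.io"
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": tenant},
    }
    # A Role only grants rights inside the namespace it lives in.
    role = {
        "apiVersion": f"{rbac_group}/v1",
        "kind": "Role",
        "metadata": {"name": "tenant-edit", "namespace": tenant},
        "rules": [{
            "apiGroups": ["", "apps"],
            "resources": ["pods", "services", "configmaps", "deployments"],
            "verbs": ["get", "list", "watch", "create", "update", "patch", "delete"],
        }],
    }
    binding = {
        "apiVersion": f"{rbac_group}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": "tenant-edit", "namespace": tenant},
        "subjects": [{"kind": "Group", "name": group, "apiGroup": rbac_group}],
        "roleRef": {"kind": "Role", "name": "tenant-edit", "apiGroup": rbac_group},
    }
    # Hypothetical limits; real values depend on the tenant's workload.
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": "tenant-quota", "namespace": tenant},
        "spec": {"hard": {"requests.cpu": "8", "requests.memory": "16Gi", "pods": "50"}},
    }
    return [namespace, role, binding, quota]


if __name__ == "__main__":
    items = tenant_manifests("team-a", "team-a-devs")
    # Wrapped in a List object so the output can be piped to `kubectl apply -f -`.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Note that nothing in these objects touches cluster-scoped resources such as CRDs or ClusterRoles, which is where the shared control plane comes into play.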

The control plane is where NaaS reaches its limits. All tenants share a single API server and a single set of cluster-scoped resources: CRDs, ClusterRoles and admission webhooks. Although RBAC mitigates this issue, it operates within the shared control plane and remains vulnerable to misconfiguration. Audit logs are shared between tenants, requiring filtering and correlation. This makes it difficult to maintain compliance in scenarios that require environment-level segregation, rather than just access controls within a shared environment.

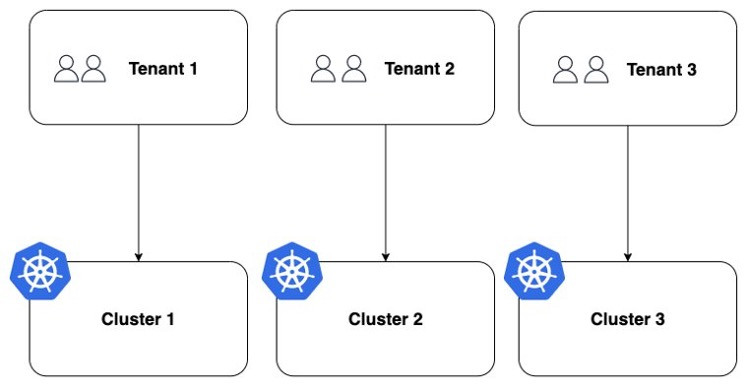

Cluster-as-a-Service (CaaS)

At the other end of the spectrum, each tenant gets a fully dedicated cluster. From a compliance standpoint, this is the simplest model: every boundary is hard. However, there are significant operational trade-offs. Infrastructure costs increase with the number of tenants. Each cluster requires its own lifecycle management, including upgrades, patching, monitoring and policy enforcement. Although fleet management tools can help, the overhead is real and grows with every additional cluster.

The Gap

Both models are viable, and one of them is the right answer for many organisations. However, there are compliance scenarios where neither is ideal: you require stronger isolation than NaaS provides, particularly at the control plane level, yet setting up dedicated clusters for each tenant is either operationally impractical or too costly. vCluster is designed to fill precisely this gap.

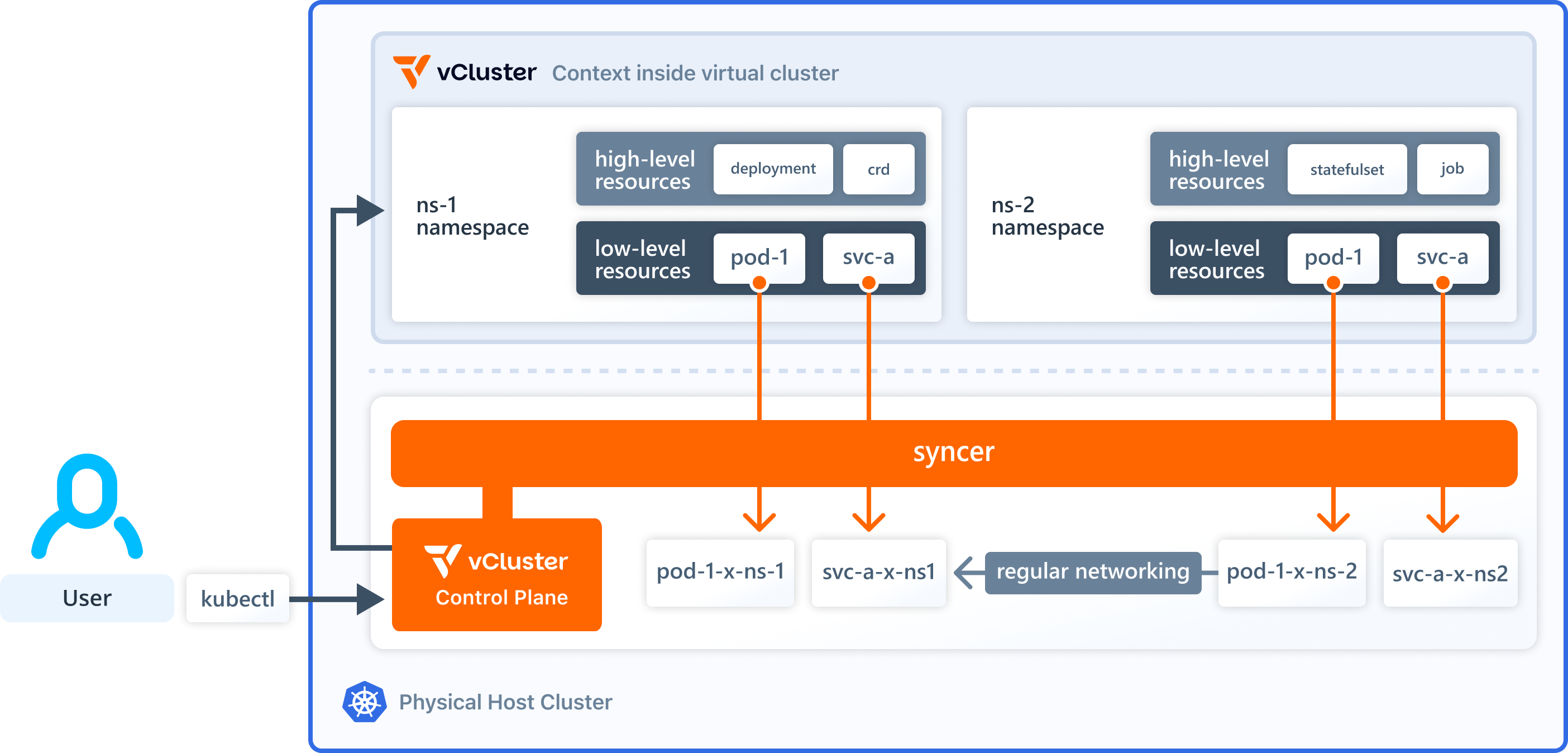

How vCluster Bridges the Gap

vCluster is an open-source technology that provisions fully functional virtual Kubernetes clusters inside a host cluster. Each virtual cluster gets its own dedicated API server, its own control plane, and its own set of cluster-scoped resources. From the tenant's perspective, the environment is indistinguishable from a dedicated cluster. At the infrastructure level, workloads are scheduled on shared host nodes.

This architecture directly targets the control plane limitation of NaaS while avoiding the operational weight of CaaS. But rather than making broad claims, let's look at exactly how it maps to what compliance frameworks require.

How vCluster Maps to Compliance Requirements

What makes vCluster compelling for regulated environments isn't the technology itself. It's how directly the architecture maps to what compliance frameworks actually require.

Isolation and Segregation

Each virtual cluster has its own API server and control plane. Cross-tenant API access is not permitted: tenants cannot see, modify or interfere with each other's cluster-scoped resources. This directly addresses the segregation requirements in ISO 27001 and the logical separation SOC 2 mandates, and it does so at a level that is difficult to reach with namespace boundaries alone.

Access Control

Each virtual cluster has its own RBAC. Roles, bindings and service accounts are entirely scoped to the virtual cluster, preventing privilege leakage across tenant boundaries. NaaS also provides RBAC at the tenant level through namespace-scoped roles. However, in a vCluster model, the isolation is structural rather than configurational, as there is no shared control plane where a misconfigured ClusterRole could inadvertently grant cross-tenant access.
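To make the misconfiguration risk in a shared control plane concrete, here is a minimal sketch of a check that a platform team might run over exported RBAC objects. It flags ClusterRoleBindings whose subjects include a tenant group, since such a binding applies across all namespaces. The function name and the sample data are hypothetical; the field names follow the standard `rbac.authorization.k8s.io/v1` schema.

```python
def cross_tenant_bindings(bindings: list[dict], tenant_groups: set[str]) -> list[str]:
    """Return names of ClusterRoleBindings that bind a tenant group cluster-wide."""
    flagged = []
    for binding in bindings:
        if binding.get("kind") != "ClusterRoleBinding":
            continue  # RoleBindings only take effect inside their own namespace
        for subject in binding.get("subjects", []):
            if subject.get("kind") == "Group" and subject.get("name") in tenant_groups:
                flagged.append(binding["metadata"]["name"])
                break
    return flagged


if __name__ == "__main__":
    # Hypothetical export, e.g. assembled from `kubectl get clusterrolebindings -o json`.
    sample = [
        {"kind": "ClusterRoleBinding", "metadata": {"name": "debug-access"},
         "subjects": [{"kind": "Group", "name": "team-a-devs"}]},
        {"kind": "RoleBinding", "metadata": {"name": "tenant-edit", "namespace": "team-a"},
         "subjects": [{"kind": "Group", "name": "team-a-devs"}]},
    ]
    print(cross_tenant_bindings(sample, {"team-a-devs"}))
```

In a virtual cluster the same kind of binding would only reach resources inside that tenant's own control plane, which is the structural difference described above.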

Auditability

As each virtual cluster has its own API server, audit logs are separated by tenant by default. This makes it easy to show auditors exactly who accessed which resources, when, and in which environment, without having to filter and correlate logs as you would with a shared API server.
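For comparison, the filtering and correlation step needed on a shared API server can be sketched as follows. The code assumes audit events are written as JSON lines in the `audit.k8s.io/v1` Event format and that a namespace-to-tenant mapping is maintained somewhere; the function name, the mapping and the sample events are hypothetical.

```python
import json
from collections import defaultdict


def split_audit_by_tenant(lines: list[str], namespace_to_tenant: dict[str, str]) -> dict[str, list[dict]]:
    """Group audit events from a shared API server by tenant, using the object's namespace."""
    per_tenant: dict[str, list[dict]] = defaultdict(list)
    for line in lines:
        if not line.strip():
            continue
        event = json.loads(line)
        ref = event.get("objectRef") or {}
        # Requests for cluster-scoped resources carry no namespace and cannot be
        # attributed to a tenant by this rule alone.
        tenant = namespace_to_tenant.get(ref.get("namespace"), "unattributed")
        per_tenant[tenant].append({
            "time": event.get("requestReceivedTimestamp"),
            "user": (event.get("user") or {}).get("username"),
            "verb": event.get("verb"),
            "resource": ref.get("resource"),
        })
    return dict(per_tenant)


if __name__ == "__main__":
    # Hypothetical sample events.
    sample = [
        '{"verb": "get", "user": {"username": "alice"}, "objectRef": {"resource": "pods", "namespace": "team-a"}, "requestReceivedTimestamp": "2026-01-01T00:00:00Z"}',
        '{"verb": "list", "user": {"username": "bob"}, "objectRef": {"resource": "customresourcedefinitions"}, "requestReceivedTimestamp": "2026-01-01T00:00:01Z"}',
    ]
    print(json.dumps(split_audit_by_tenant(sample, {"team-a": "tenant-a"}), indent=2))
```

The "unattributed" bucket is the part that needs manual correlation in a shared setup. With one API server per tenant, the log stream is already scoped and this step is not needed.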

Network Segmentation

When combined with network policies that are enforced at the host cluster level, vCluster supports the network segmentation that is required by PCI-DSS. Traffic between virtual clusters can be restricted, keeping workloads isolated at the network layer. It is worth noting that network policies achieve similar results in a NaaS model. vCluster does not fundamentally change the story of network isolation, but rather layers it on top of stronger control plane separation.
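A host-level policy of this kind might look like the sketch below, which builds a standard `networking.k8s.io/v1` NetworkPolicy that only admits ingress from pods in the same namespace. It assumes that each virtual cluster's workloads run in their own host namespace and that the host cluster's CNI plugin enforces NetworkPolicy; the namespace name `vcluster-team-a` and the policy name are hypothetical.

```python
import json


def same_namespace_only_policy(host_namespace: str) -> dict:
    """Allow ingress only from pods in the same host namespace; other ingress is denied."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-same-namespace-only", "namespace": host_namespace},
        "spec": {
            # An empty podSelector selects every pod in the policy's namespace.
            "podSelector": {},
            "policyTypes": ["Ingress"],
            # A podSelector without a namespaceSelector matches only pods in the
            # policy's own namespace, so traffic from other namespaces is dropped.
            "ingress": [{"from": [{"podSelector": {}}]}],
        },
    }


if __name__ == "__main__":
    print(json.dumps(same_namespace_only_policy("vcluster-team-a"), indent=2))
```

The same manifest works for a plain tenant namespace in a NaaS setup, which is why network isolation on its own does not distinguish the two models.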

Compute Isolation

Some compliance scenarios require compute-level separation, ensuring that a tenant's workloads never share the same underlying nodes as those of other tenants. vCluster supports this by mapping virtual clusters to dedicated node pools, known as Private Nodes.

It is important to note that compute isolation is not unique to vCluster. Dedicated node pools with taints and tolerations, or dynamic provisioners such as Karpenter, can achieve the same thing in a NaaS setup. The difference is that vCluster combines compute isolation and control plane separation in a single model, which matters when your compliance requirements demand both.
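The taint-and-toleration approach can be sketched as a small helper that pins a pod manifest to a tenant's node pool. It assumes the pool's nodes carry both a label and a `NoSchedule` taint with the same key and value; the key `example.com/tenant` and the helper name are hypothetical. This shows the generic Kubernetes mechanism, not how vCluster configures Private Nodes.

```python
import copy


def pin_to_tenant_pool(pod: dict, tenant: str, key: str = "example.com/tenant") -> dict:
    """Return a copy of the pod manifest that may only run on the tenant's node pool."""
    pinned = copy.deepcopy(pod)
    spec = pinned.setdefault("spec", {})
    # The nodeSelector keeps the tenant's pods on the labelled pool.
    spec.setdefault("nodeSelector", {})[key] = tenant
    # The toleration lets them past the taint that keeps other tenants' pods off,
    # e.g. `kubectl taint nodes <node> example.com/tenant=team-a:NoSchedule`.
    spec.setdefault("tolerations", []).append({
        "key": key,
        "operator": "Equal",
        "value": tenant,
        "effect": "NoSchedule",
    })
    return pinned


if __name__ == "__main__":
    pod = {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "web", "namespace": "team-a"},
        "spec": {"containers": [{"name": "web", "image": "nginx"}]},
    }
    print(pin_to_tenant_pool(pod, "team-a")["spec"])
```

Both halves are needed: the taint alone keeps foreign pods away but does not stop the tenant's pods from landing on shared nodes, and the selector alone does the reverse. In practice this mutation would usually be applied by a policy engine or admission webhook rather than by hand.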

Where vCluster Fits in the Bigger Picture

A side-by-side comparison of the three approaches provides a clear picture:

| Requirement | NaaS | vCluster | CaaS |
| --- | --- | --- | --- |
| Access Control | RBAC per namespace | Independent RBAC per virtual cluster | Fully independent per cluster |
| Environment Segregation | Logical (shared control plane) | Control plane-level separation | Full infrastructure separation |
| Audit Trails | Shared API server logs (filtering required) | Separate API server logs per tenant | Fully independent logs |
| Network Segmentation | Network policies | Network policies + host-level enforcement | Cluster-level boundaries |
| Compute Isolation | Dedicated node pools | Dedicated node pools (Private Nodes) | Inherent |
| Operational Overhead | Low | Medium | High |
| Infrastructure Cost | Low | Low | High |

vCluster doesn't replace the other models. It fills a specific and important gap: compliance scenarios that require control plane-level isolation without the operational cost of fully dedicated clusters.

Where This Leaves You

In Kubernetes, compliance doesn't have to mean slow development cycles, escalating infrastructure costs or isolation models that won't stand up to audit inspection. However, it does require you to choose the right isolation model for your specific regulatory context.

If your compliance requirements demand environment-level segregation, separate control planes, independent audit trails and structural isolation boundaries, and if you need to deliver these efficiently across multiple tenants, vCluster is worth considering.

Get in touch with us to discuss how we can solve your compliance challenges in multi-tenant Kubernetes environments.

And if you want to see the technical deep dive into how vCluster solves multi-tenancy isolation in practice, read our other blog post: Solving Kubernetes Multi-Tenancy Challenges with vCluster.